Descriptive statistics, probability, and inferential statistics are three essential branches of statistics that help researchers and analysts understand and draw conclusions from data. Let’s work through 50 common interview questions covering each of these areas:

1. Question: What are the main measures of central tendency in Descriptive Statistics?

Sample Answer: The main measures of central tendency are the mean, median, and mode. The mean is the sum of all values divided by the total number of observations and is sensitive to outliers. The median is the middle value of a dataset when it’s arranged in ascending or descending order and is more robust to outliers. The mode is the value that appears most frequently in the dataset.
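
As a small illustration of how an outlier pulls the mean but barely moves the median, here is a sketch using Python's standard `statistics` module. The numbers are made up for the example.

```python
import statistics

values = [2, 3, 3, 5, 7]          # hypothetical observations
with_outlier = values + [100]     # same data plus one extreme value

mean_clean = statistics.mean(values)
median_clean = statistics.median(values)
mode_clean = statistics.mode(values)

mean_outlier = statistics.mean(with_outlier)
median_outlier = statistics.median(with_outlier)

print("clean:       ", mean_clean, median_clean, mode_clean)
print("with outlier:", mean_outlier, median_outlier)
```

The mean jumps from 4 to 20 once the outlier is added, while the median only moves from 3 to 4.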

2. Question: How do you calculate the standard deviation, and what does it indicate about the data?

Sample Answer: The standard deviation measures the amount of variation or dispersion in a dataset. To calculate it, subtract the mean from each data point, square the result, calculate the mean of those squared differences (the variance), and finally take the square root. When the data is a sample rather than the whole population, divide the sum of squared differences by n-1 instead of n. A higher standard deviation suggests that the data points are more spread out from the mean, indicating greater variability in the dataset.
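
The calculation steps can be sketched directly in Python. The data below is illustrative. The sketch also shows the sample version, which divides by n-1 instead of n, and compares both against the standard library.

```python
import math
import statistics

data = [2, 4, 4, 4, 5, 5, 7, 9]  # illustrative values

mean = sum(data) / len(data)
squared_diffs = [(x - mean) ** 2 for x in data]

# Population standard deviation: divide by n
population_sd = math.sqrt(sum(squared_diffs) / len(data))
# Sample standard deviation: divide by n - 1
sample_sd = math.sqrt(sum(squared_diffs) / (len(data) - 1))

print(population_sd, statistics.pstdev(data))
print(sample_sd, statistics.stdev(data))
```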

3. Question: Can you explain the concept of skewness in Descriptive Statistics?

Sample Answer: Skewness measures the asymmetry of a probability distribution. If the data is skewed to the right, the tail is elongated towards larger values, and if it’s skewed to the left, the tail is elongated towards smaller values. A perfectly symmetric distribution has a skewness of zero. Understanding skewness is important because it affects the choice of appropriate statistical methods and models.
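
One common way to put a number on skewness is the moment-based coefficient, the third central moment divided by the variance raised to the power 1.5. The sketch below uses that definition with hypothetical data. Statistical libraries may apply a bias correction, so their values can differ slightly.

```python
def skewness(data):
    """Moment-based skewness: m3 / m2**1.5 (no bias correction)."""
    n = len(data)
    mean = sum(data) / n
    m2 = sum((x - mean) ** 2 for x in data) / n
    m3 = sum((x - mean) ** 3 for x in data) / n
    return m3 / m2 ** 1.5

symmetric = [1, 2, 3, 4, 5]
right_skewed = [1, 2, 2, 3, 3, 3, 4, 20]     # long tail towards large values
left_skewed = [-x for x in right_skewed]     # mirror image

print(skewness(symmetric), skewness(right_skewed), skewness(left_skewed))
```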

4. Question: What is kurtosis, and how does it relate to the shape of distribution?

Sample Answer: Kurtosis is often described as the peakedness or flatness of a probability distribution, but it is driven mainly by the weight of the tails. A high kurtosis value indicates heavy tails and a greater tendency to produce extreme values, while a low kurtosis value indicates lighter tails. Understanding kurtosis helps data scientists gauge how prone the data is to outliers and other abnormalities.
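
A minimal sketch of excess kurtosis follows, defined here as the fourth central moment divided by the squared variance, minus 3, so that a normal distribution scores roughly zero. The two datasets are invented to contrast light and heavy tails.

```python
def excess_kurtosis(data):
    """Moment-based excess kurtosis: m4 / m2**2 - 3 (no bias correction)."""
    n = len(data)
    mean = sum(data) / n
    m2 = sum((x - mean) ** 2 for x in data) / n
    m4 = sum((x - mean) ** 4 for x in data) / n
    return m4 / m2 ** 2 - 3

flat = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]             # evenly spread, light tails
heavy_tailed = [5, 5, 5, 5, 5, 5, 5, 5, -20, 30]   # mostly central, two extremes

print(excess_kurtosis(flat))          # negative
print(excess_kurtosis(heavy_tailed))  # positive
```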

5. Question: How do you handle missing data in Descriptive Statistics?

Sample Answer: Handling missing data is crucial to maintain the integrity of the analysis. Common methods include deleting rows with missing values, imputing missing values using the mean or median, or employing more advanced techniques like multiple imputation. The choice of method depends on the nature of the data and the impact of missing values on the analysis.

6. Question: Describe the difference between a histogram and a bar chart.

Sample Answer: Both histograms and bar charts are used to visualize data, but they are used for different types of data. A histogram is used to display the distribution of continuous numerical data, with the x-axis representing intervals or bins and the y-axis showing the frequency or count of data points within each bin. On the other hand, a bar chart is used to represent discrete data, where each category is represented by a separate bar, and the length of the bar indicates the frequency or count of occurrences.

7. Question: How do you identify outliers in a dataset using Descriptive Statistics?

Sample Answer: Outliers are extreme values that deviate significantly from the rest of the data. One way to identify outliers is by calculating the Z-score of each data point, which measures how many standard deviations it is away from the mean. Data points with Z-scores beyond a certain threshold (e.g., ±3) are considered outliers.
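
The Z-score rule can be sketched in a few lines. The data and the threshold of 3 are illustrative. Note that in very small samples no point can reach a Z-score of 3, so the rule needs a reasonable number of observations.

```python
import statistics

values = [48, 49, 50, 51, 52] * 4 + [120]  # hypothetical data with one extreme value

mean = statistics.mean(values)
sd = statistics.pstdev(values)

z_scores = [(x - mean) / sd for x in values]
outliers = [x for x, z in zip(values, z_scores) if abs(z) > 3]

print(outliers)
```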

8. Question: When would you use a box plot (box-and-whisker plot) to represent data, and what insights can you gain from it?

Sample Answer: A box plot is useful for visualizing the distribution of data, displaying the median, quartiles, and potential outliers. It helps identify the spread, skewness, and symmetry of the dataset. Additionally, box plots are valuable for comparing multiple groups or categories and identifying any differences in their central tendency and spread.
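
The numbers behind a box plot can be computed without plotting anything. The sketch below assumes the common 1.5 × IQR rule for flagging potential outliers and uses made-up data. Quartile values depend on the interpolation method, so other tools may report slightly different figures.

```python
import statistics

data = [1, 2, 3, 4, 5, 6, 7, 8, 9, 50]  # hypothetical values

# Quartiles (default "exclusive" method of statistics.quantiles)
q1, median, q3 = statistics.quantiles(data, n=4)
iqr = q3 - q1

# Whisker limits under the 1.5 * IQR convention
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr
outliers = [x for x in data if x < lower_fence or x > upper_fence]

print(q1, median, q3, iqr)
print(outliers)
```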

9. Question: Can you explain the concept of the coefficient of variation (CV) in Descriptive Statistics?

Sample Answer: The coefficient of variation (CV) is a relative measure of variability, calculated as the ratio of the standard deviation to the mean. It is used to compare the variability of different datasets, especially when their means differ significantly. A lower CV indicates less relative variability, making it easier to compare datasets with different scales or units.

10. Question: What is the difference between a population and a sample in Descriptive Statistics?

Sample Answer: In Descriptive Statistics, a population refers to the entire set of individuals or data points under consideration, while a sample is a subset of the population used to draw inferences about the entire population. Sample statistics, such as the mean and standard deviation, are used to estimate population parameters and understand the population’s characteristics.

11. Question: How do you check for data normality in Descriptive Statistics?

Sample Answer: Checking for data normality is essential for determining whether parametric or non-parametric statistical methods are appropriate for analysis. Common methods to assess normality include visual inspection using histograms or Q-Q plots, and statistical tests like the Shapiro-Wilk test or the Kolmogorov-Smirnov test.

12. Question: Explain the concept of correlation in Descriptive Statistics and how it is measured.

Sample Answer: Correlation measures the strength and direction of the relationship between two variables. Pearson’s correlation coefficient measures linear relationships between continuous variables, while Spearman’s rank correlation coefficient captures monotonic relationships that need not be linear. Both range from -1 to 1. A positive correlation indicates that the variables move in the same direction, while a negative correlation suggests they move in opposite directions.
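
To make the difference between the two coefficients concrete, here is a hand-rolled sketch. The helper names are hypothetical, and the ranking helper assumes no tied values. The data follows a perfectly increasing but non-linear curve.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def ranks(values):
    """Rank 1 = smallest value; assumes there are no ties."""
    order = sorted(values)
    return [order.index(v) + 1 for v in values]

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

x = [1, 2, 3, 4, 5]
y = [v ** 3 for v in x]   # monotonic but not linear

print(pearson(x, y))      # below 1
print(spearman(x, y))     # 1 up to rounding
```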

13. Question: What is probability, and how is it used in data science?

Sample Answer: Probability is a measure of the likelihood of an event occurring. In data science, it helps us make informed decisions in uncertain situations. For instance, in predicting customer churn, I can use probability to estimate the likelihood of a customer leaving based on historical data. This enables businesses to prioritize retention efforts and allocate resources more effectively.

14. Question: Explain the difference between conditional probability and joint probability.

Sample Answer: Conditional probability refers to the probability of an event happening given that another event has already occurred. On the other hand, joint probability is the probability of two or more events happening simultaneously. For instance, in a medical diagnosis scenario, the conditional probability would help determine the likelihood of a patient having a specific disease given certain symptoms, while joint probability would estimate the probability of the patient experiencing multiple symptoms at once.

15. Question: How do you calculate the probability of independent events?

Sample Answer: In the case of independent events, the probability of both events occurring is the product of their individual probabilities. For example, if the probability of flipping a coin and getting heads is 0.5, and the probability of rolling a die and getting a 4 is 1/6, the probability of both events occurring together is 0.5 * 1/6 = 1/12.
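
The product rule in the coin-and-die example can be checked by listing every equally likely outcome. This is a small sketch using exact fractions.

```python
from fractions import Fraction
from itertools import product

coin = ["H", "T"]
die = [1, 2, 3, 4, 5, 6]
outcomes = list(product(coin, die))   # 12 equally likely (coin, die) pairs

# Product rule for independent events
p_heads = Fraction(1, 2)
p_four = Fraction(1, 6)
p_product = p_heads * p_four

# Direct count over the joint sample space
favourable = [o for o in outcomes if o == ("H", 4)]
p_enumerated = Fraction(len(favourable), len(outcomes))

print(p_product, p_enumerated)
```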

16. Question: What is the difference between discrete and continuous probability distributions?

Sample Answer: Discrete probability distributions are used for variables with countable outcomes, such as the number of customers arriving at a store. Continuous probability distributions, like the normal distribution, are used for variables that can take any value within a range, such as height or weight measurements.

17. Question: How do you calculate the expected value of a discrete probability distribution?

Sample Answer: The expected value, also known as the mean of a discrete probability distribution, is calculated by multiplying each possible outcome by its probability and summing them up. For instance, in a dice roll where each number has an equal probability of 1/6, the expected value is (1 * 1/6) + (2 * 1/6) + (3 * 1/6) + (4 * 1/6) + (5 * 1/6) + (6 * 1/6) = 3.5.
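
The same sum can be written as a one-liner. Exact fractions are used here to avoid rounding.

```python
from fractions import Fraction

faces = range(1, 7)
probability = Fraction(1, 6)   # fair die: every face equally likely

# Expected value = sum of outcome * probability
expected_value = sum(face * probability for face in faces)

print(expected_value, float(expected_value))
```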

18. Question: What is the law of large numbers, and how does it relate to probability?

Sample Answer: The law of large numbers states that as the number of trials or observations increases, the average of the results will converge to the expected value or true probability. This is important in data science because it reinforces the idea that with more data, our estimates become more accurate and reliable.
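
A quick simulation illustrates the idea. The sketch assumes a fair six-sided die, whose expected value is 3.5, and uses a fixed seed so the run is repeatable.

```python
import random

rng = random.Random(0)  # fixed seed for repeatability

def average_roll(n):
    """Average of n simulated rolls of a fair six-sided die."""
    return sum(rng.randint(1, 6) for _ in range(n)) / n

small = average_roll(10)
large = average_roll(100_000)

print(small)   # can be noticeably off from 3.5
print(large)   # very close to 3.5
```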

19. Question: How do you use Bayes’ theorem in data science applications?

Sample Answer: Bayes’ theorem allows us to update our beliefs or probabilities based on new evidence. In data science, it is used in Bayesian inference, which helps us revise our initial beliefs about model parameters as we collect more data. For example, in medical testing, Bayes’ theorem helps us update the probability of a patient having a disease based on the test results.
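
The medical-testing case can be worked through numerically. The prevalence, sensitivity, and false positive rate below are hypothetical figures chosen only to show the mechanics.

```python
# Hypothetical test characteristics
prevalence = 0.01            # P(disease)
sensitivity = 0.95           # P(positive | disease)
false_positive_rate = 0.10   # P(positive | no disease)

# Total probability of a positive result
p_positive = sensitivity * prevalence + false_positive_rate * (1 - prevalence)

# Bayes' theorem: P(disease | positive)
posterior = sensitivity * prevalence / p_positive

print(round(posterior, 4))
```

Even with a fairly accurate test, the posterior probability stays below 9% because the disease is rare in this scenario.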

20. Question: What is the concept of a sample space in probability theory?

Sample Answer: The sample space is the set of all possible outcomes of an experiment. For example, when rolling a six-sided die, the sample space is {1, 2, 3, 4, 5, 6}. Understanding the sample space is essential for calculating probabilities and making informed decisions.

21. Question: Explain the difference between mutually exclusive and independent events.

Sample Answer: Mutually exclusive events cannot occur at the same time, meaning if one event happens, the other cannot. For example, when flipping a coin, getting heads and tails are mutually exclusive events. Independent events, on the other hand, are events where the occurrence of one does not affect the probability of the other happening. For instance, rolling a die and flipping a coin are independent events.

22. Question: What is the principle of inclusion-exclusion, and how is it used in probability?

Sample Answer: The principle of inclusion-exclusion is a counting technique used to calculate the probability of the union of multiple events. It helps avoid double-counting cases and enables us to find the probability of events that overlap. In data science, this principle is useful in calculating probabilities involving multiple conditions or factors.
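
For two events the principle reduces to P(A or B) = P(A) + P(B) - P(A and B). The sketch below checks this on a single roll of a fair die, with the two events chosen purely for illustration.

```python
from fractions import Fraction

sample_space = set(range(1, 7))                # one roll of a fair die
A = {x for x in sample_space if x % 2 == 0}    # even number
B = {x for x in sample_space if x > 3}         # greater than 3

def prob(event):
    """Probability of an event when all outcomes are equally likely."""
    return Fraction(len(event), len(sample_space))

# Inclusion-exclusion: subtract the overlap so it is not counted twice
p_union_formula = prob(A) + prob(B) - prob(A & B)
p_union_direct = prob(A | B)

print(p_union_formula, p_union_direct)
```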

23. Question: How do you use probability distributions in hypothesis testing?

Sample Answer: Probability distributions play a crucial role in hypothesis testing by helping us assess the likelihood of obtaining certain results under different conditions. For instance, in a t-test, we use the t-distribution to find the probability of observing the difference in sample means if the null hypothesis is true. This probability helps us decide whether to reject or fail to reject the null hypothesis.

24. Question: Explain the concept of a confidence interval and its connection to probability.

Sample Answer: A confidence interval is a range of values, computed from sample data, that is likely to contain the true population parameter. The confidence level is where probability comes in: it describes the procedure rather than any single interval. If we repeated the sampling many times and built a 95% confidence interval each time, about 95% of those intervals would contain the true parameter.
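
The repeated-sampling meaning of the confidence level can be checked with a small simulation. This sketch assumes normally distributed data with a known standard deviation, so a simple z-interval applies. The true mean, sample size, and seed are arbitrary choices.

```python
import math
import random
import statistics

rng = random.Random(7)  # fixed seed for repeatability
TRUE_MEAN, SIGMA, N, TRIALS = 50.0, 10.0, 30, 2000
z = statistics.NormalDist().inv_cdf(0.975)   # about 1.96 for a 95% interval

covered = 0
for _ in range(TRIALS):
    sample = [rng.gauss(TRUE_MEAN, SIGMA) for _ in range(N)]
    centre = statistics.fmean(sample)
    margin = z * SIGMA / math.sqrt(N)        # sigma treated as known
    if centre - margin <= TRUE_MEAN <= centre + margin:
        covered += 1

coverage = covered / TRIALS
print(coverage)   # should land near 0.95
```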

25. Question: What is inferential statistics, and how is it different from descriptive statistics?

Sample Answer: Inferential statistics involves making predictions or drawing conclusions about a population based on sample data. It uses methods like hypothesis testing and confidence intervals to estimate population parameters. Descriptive statistics, on the other hand, summarizes and describes the main features of a dataset without making inferences.

26. Question: How do you formulate null and alternative hypotheses in hypothesis testing?

Sample Answer: The null hypothesis (H0) is the default assumption that there is no significant difference or relationship between variables. The alternative hypothesis (Ha) represents the assertion we want to test, suggesting that there is a significant difference or relationship. For example, in testing a new drug’s effectiveness, the null hypothesis could be “The new drug has no effect,” while the alternative hypothesis could be “The new drug is effective in reducing symptoms.”

27. Question: Explain Type I and Type II errors in hypothesis testing.

Sample Answer: In hypothesis testing, a Type I error occurs when we reject the null hypothesis when it is true. It represents a false positive, meaning we mistakenly conclude there is a significant effect or relationship. A Type II error happens when we fail to reject the null hypothesis when it is false, resulting in a false negative, indicating we miss a significant effect or relationship.

28. Question: How do you choose the appropriate significance level (alpha) for hypothesis testing?

Sample Answer: The significance level (alpha) is the probability of making a Type I error. It is typically set to 0.05 (5%), indicating a 5% chance of rejecting the null hypothesis when it is true. The choice of alpha depends on the consequences of making Type I errors and the level of certainty required for the analysis. When a false positive is costly, a stricter level such as 0.01 is a common choice.

29. Question: What is the p-value, and how do you interpret it in hypothesis testing?

Sample Answer: The p-value represents the probability of obtaining results as extreme as, or more extreme than, the observed results, assuming the null hypothesis is true. If the p-value is less than the significance level (alpha), we reject the null hypothesis. For example, if the p-value is 0.02 and alpha is 0.05, we reject the null hypothesis at a 5% significance level.

30. Question: How do you perform a one-sample t-test, and when is it used?

Sample Answer: A one-sample t-test is used to determine if the mean of a single sample significantly differs from a known or hypothesized value. For example, if we want to test if the average weight of apples in a shipment is 500 grams, we would use a one-sample t-test to assess whether the sample mean significantly deviates from 500 grams.
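
The apple example can be sketched by computing the t-statistic by hand. The weights are invented, and the critical value is taken from a standard t-table because the Python standard library has no t-distribution. A statistics package would normally return the p-value directly.

```python
import math
import statistics

weights = [495, 502, 498, 505, 490, 497, 503, 499]  # hypothetical sample, in grams
hypothesized_mean = 500

n = len(weights)
sample_mean = statistics.mean(weights)
sample_sd = statistics.stdev(weights)            # sample SD (divides by n - 1)
standard_error = sample_sd / math.sqrt(n)

t_stat = (sample_mean - hypothesized_mean) / standard_error
df = n - 1

T_CRITICAL = 2.365  # two-sided 5% critical value for df = 7, from a t-table
reject_null = abs(t_stat) > T_CRITICAL

print(round(t_stat, 3), df, reject_null)
```

Here the statistic is well inside the critical range, so this sample gives no evidence that the mean weight differs from 500 grams.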

31. Question: Explain the concept of the Central Limit Theorem and its significance in inferential statistics.

Sample Answer: The Central Limit Theorem states that, regardless of the population’s distribution, the sampling distribution of the sample mean approaches a normal distribution as the sample size increases. This allows us to make valid inferences about population parameters based on the sample mean, making it a foundational concept in inferential statistics.
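
A simulation makes the theorem tangible. The sketch below draws samples from an exponential distribution, which is strongly skewed, and looks at the distribution of the sample means. The sample size, number of repetitions, and seed are arbitrary assumptions.

```python
import math
import random
import statistics

rng = random.Random(42)  # fixed seed for repeatability
SAMPLE_SIZE, REPETITIONS = 50, 2000

def sample_mean(n):
    """Mean of n draws from an exponential distribution with mean 1."""
    return statistics.fmean([rng.expovariate(1.0) for _ in range(n)])

means = [sample_mean(SAMPLE_SIZE) for _ in range(REPETITIONS)]

centre = statistics.fmean(means)
spread = statistics.stdev(means)
theoretical_spread = 1.0 / math.sqrt(SAMPLE_SIZE)  # sigma / sqrt(n), with sigma = 1

print(centre, spread, theoretical_spread)
```

The sample means cluster around the population mean of 1 with a spread close to sigma / sqrt(n), even though the underlying data is far from normal.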

32. Question: What is a confidence interval, and how do you interpret it?

Sample Answer: A confidence interval is a range of values within which we believe the true population parameter lies with a certain level of confidence. For example, a 95% confidence interval for the mean age of customers could be [25, 35], indicating that we are 95% confident that the true mean age falls between 25 and 35 years.

33. Question: How do you perform a chi-square test, and in what scenarios is it used?

Sample Answer: The chi-square test is used to determine if there is a significant association between two categorical variables. It compares the observed frequencies to the expected frequencies under the assumption of independence. For example, we could use a chi-square test to assess whether there is a relationship between gender and the preference for a specific product.
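
As a sketch of the mechanics, the statistic for a 2×2 table can be computed by hand. The counts are hypothetical and no continuity correction is applied. The closed-form p-value used here holds only for one degree of freedom, where the chi-square statistic is the square of a standard normal variable.

```python
import math

# Hypothetical counts: rows = two groups, columns = prefers / does not prefer
observed = [[30, 20],
            [20, 30]]

row_totals = [sum(row) for row in observed]
col_totals = [sum(col) for col in zip(*observed)]
total = sum(row_totals)

chi2 = 0.0
for i, row in enumerate(observed):
    for j, obs in enumerate(row):
        # Expected count if the two variables were independent
        expected = row_totals[i] * col_totals[j] / total
        chi2 += (obs - expected) ** 2 / expected

df = (len(observed) - 1) * (len(observed[0]) - 1)
p_value = math.erfc(math.sqrt(chi2 / 2))   # valid only when df == 1

print(chi2, df, round(p_value, 4))
```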

34. Question: When do you use a one-tailed (one-sided) test versus a two-tailed (two-sided) test in hypothesis testing?

Sample Answer: In a one-tailed test, we are interested in whether the value of a parameter is significantly greater than or less than the hypothesized value. In a two-tailed test, we want to determine if the parameter significantly differs from the hypothesized value in any direction. The choice between the two depends on the research question and the specific hypothesis being tested.

35. Question: Can you explain the concept of degrees of freedom in inferential statistics?

Sample Answer: Degrees of freedom represent the number of independent pieces of information available for calculating a statistic. In a one-sample t-test, the degrees of freedom are (n-1), where n is the sample size. In a chi-square test of independence, the degrees of freedom are (r-1)(c-1), where r and c are the numbers of categories in the two variables being compared.

36. Question: How do you handle multiple comparisons in inferential statistics?

Sample Answer: When conducting multiple statistical tests simultaneously, the chance of obtaining false positives (Type I errors) increases. To control for this, we can use the Bonferroni correction, which controls the family-wise error rate by tightening the significance level for each test, or the Benjamini-Hochberg procedure, which controls the False Discovery Rate (FDR). Both reduce the risk of false positives across the whole set of tests.
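
Both kinds of correction are short enough to sketch by hand. The function names and p-values below are hypothetical. The Bonferroni version compares each p-value to alpha / m, while the Benjamini-Hochberg version finds the largest rank k whose sorted p-value is at most (k / m) × alpha and rejects everything up to that rank.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject when p <= alpha / m (controls the family-wise error rate)."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]

def benjamini_hochberg(p_values, alpha=0.05):
    """Benjamini-Hochberg step-up procedure (controls the false discovery rate)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank          # keep the largest rank that passes
    reject = [False] * m
    for idx in order[:max_k]:
        reject[idx] = True
    return reject

p_values = [0.001, 0.012, 0.025, 0.038, 0.20]  # hypothetical test results

print(bonferroni(p_values))
print(benjamini_hochberg(p_values))
```

On these values Bonferroni rejects only the first hypothesis, while Benjamini-Hochberg rejects the first four, which reflects its less conservative error criterion.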

37. Question: What are confidence intervals for proportions, and how are they calculated?

Sample Answer: Confidence intervals for proportions estimate the range in which the true population proportion lies with a certain level of confidence. For example, if 150 out of 500 customers prefer a product, the proportion estimate is 150/500 = 0.3. To calculate the confidence interval, we use the sample proportion and the standard error of the proportion.
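
Continuing the 150-out-of-500 example, the sketch below computes a 95% interval with the normal-approximation (Wald) formula, p ± z × sqrt(p(1-p)/n). This is one of several available methods and is assumed here for simplicity.

```python
import math
import statistics

successes, n = 150, 500
p_hat = successes / n

z = statistics.NormalDist().inv_cdf(0.975)          # about 1.96 for 95% confidence
standard_error = math.sqrt(p_hat * (1 - p_hat) / n)

lower = p_hat - z * standard_error
upper = p_hat + z * standard_error

print(round(lower, 3), round(upper, 3))
```

The interval works out to roughly 0.26 to 0.34.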

38. Question: How do you choose between parametric and non-parametric tests in inferential statistics?

Sample Answer: Parametric tests assume that the data follow a specific distribution, such as normal distribution, while non-parametric tests do not make such assumptions. When the data meets the assumptions of parametric tests, they generally have more statistical power. If the data violates the assumptions, non-parametric tests are more appropriate.

39. Question: How do you determine the sample size required for an inferential study?

Sample Answer: Determining the sample size depends on factors like the desired level of confidence, the margin of error, and the variability in the population. Techniques like power analysis or using sample size calculators can help data scientists determine the appropriate sample size to achieve the desired level of precision in their study.
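
For the simple case of estimating a proportion, the required sample size follows from n = z² × p(1-p) / E², where E is the margin of error. The helper below is a hypothetical sketch of that formula. It defaults to p = 0.5, the most conservative choice when the true proportion is unknown.

```python
import math
import statistics

def sample_size_for_proportion(margin, confidence=0.95, p=0.5):
    """Smallest n so that the normal-approximation margin of error is at most `margin`."""
    z = statistics.NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)

n_needed = sample_size_for_proportion(margin=0.05)
print(n_needed)
```

Tightening the margin from 5 to 3 percentage points raises the requirement from 385 to 1068 respondents under the same assumptions.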

40. Question: What is the ANOVA test, and when is it used in data science?

Sample Answer: The ANOVA test is a statistical technique used to compare the means of two or more groups to determine if there are significant differences between them. It is commonly used when dealing with categorical independent variables and a continuous dependent variable. For example, in a marketing study, we could use ANOVA to analyze the impact of different advertising strategies on sales across multiple regions.

41. Question: How do you interpret the results of an ANOVA test?

Sample Answer: The ANOVA test produces an F-statistic and a p-value. The F-statistic measures the ratio of the variation between group means to the variation within groups. If the p-value associated with the F-statistic is below the chosen significance level (e.g., 0.05), we reject the null hypothesis and conclude that at least one group mean significantly differs from the others. Post-hoc tests are then conducted to identify which specific group means differ significantly.
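
The F-statistic can be computed by hand to see where the ratio comes from. The three groups below are invented. Turning the statistic into a p-value requires the F-distribution, which a statistics package would normally supply.

```python
import statistics

# Hypothetical scores for three groups
groups = {
    "A": [4, 5, 6],
    "B": [6, 7, 8],
    "C": [8, 9, 10],
}

all_values = [x for g in groups.values() for x in g]
grand_mean = statistics.fmean(all_values)

# Variation of the group means around the grand mean
ss_between = sum(len(g) * (statistics.fmean(g) - grand_mean) ** 2
                 for g in groups.values())
# Variation of the observations around their own group mean
ss_within = sum((x - statistics.fmean(g)) ** 2
                for g in groups.values() for x in g)

df_between = len(groups) - 1
df_within = len(all_values) - len(groups)

f_stat = (ss_between / df_between) / (ss_within / df_within)
print(f_stat, df_between, df_within)
```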

42. Question: What is the difference between one-way and two-way ANOVA?

Sample Answer: One-way ANOVA is used when we have one categorical independent variable with three or more groups, and we want to compare their means. On the other hand, two-way ANOVA involves two independent variables, allowing us to study the main effects of each variable and their interaction effect on the dependent variable. Two-way ANOVA is suitable when we have two categorical independent variables and a continuous dependent variable.

43. Question: How do you handle the assumptions of the ANOVA test?

Sample Answer: The ANOVA test assumes that the dependent variable follows a normal distribution within each group and that the groups have equal variances. Additionally, observations are assumed to be independent. To ensure these assumptions are met, data scientists often perform tests like the Shapiro-Wilk test for normality and Levene’s test for equal variances. If the assumptions are violated, transformations or non-parametric alternatives may be considered.

44. Question: Can you provide an example of how you would use ANOVA in a real-world data analysis project?

Sample Answer: Sure! Let’s consider a scenario where a pharmaceutical company is testing the efficacy of three different drugs (Drug A, Drug B, and Drug C) to treat a certain medical condition. The dependent variable is the improvement in the patient’s symptoms, and the independent variable is the drug administered. We collect data from randomized controlled trials for each drug and conduct a one-way ANOVA test to determine if there are significant differences in the mean improvement scores between the three drugs. If the test is significant, we can follow up with post-hoc tests, such as Tukey’s Honestly Significant Difference (HSD) test, to identify which drug(s) show significantly better outcomes compared to others.

45. Question: What is the t-test, and in what scenarios is it used in data science?

Sample Answer: The t-test is a statistical hypothesis test used to determine if there is a significant difference between the means of two groups. It is commonly used when dealing with continuous data and a categorical independent variable with two levels. For example, in a clinical trial, we could use a t-test to compare the average response of patients in a treatment group with those in a control group.

46. Question: Explain the difference between a one-sample t-test and a two-sample t-test.

Sample Answer: In a one-sample t-test, we compare the mean of a single sample to a known or hypothesized value. This is useful when we want to determine if a sample mean significantly differs from a specific value. On the other hand, a two-sample t-test compares the means of two independent groups to determine if there is a significant difference between them.

47. Question: What are the assumptions of the t-test, and how do you check for them?

Sample Answer: The assumptions of the t-test are: 1) The data within each group follows a normal distribution, 2) The variances of the groups are equal (homoscedasticity), and 3) The observations are independent. We can check for normality using visual methods like histograms or Q-Q plots, or statistical tests like the Shapiro-Wilk test. To assess equal variances, we can use Levene’s test or compare the spread of the groups visually with boxplots. If the variances are clearly unequal, Welch’s t-test is a common alternative that does not require them to be equal.

48. Question: How do you interpret the p-value in a t-test?

Sample Answer: The p-value in a t-test represents the probability of observing the data or more extreme results, assuming that the null hypothesis is true. A small p-value (typically below the chosen significance level, e.g., 0.05) suggests that the difference between the groups’ means is unlikely to have occurred by chance alone. If the p-value is below the significance level, we reject the null hypothesis and conclude that there is a significant difference between the groups’ means.

49. Question: Can you provide an example of when you would use a paired t-test in data analysis?

Sample Answer: Sure! Let’s consider a scenario where we are evaluating the effectiveness of a weight loss program. We measure the weight of participants before and after the program. Since the same individuals are measured twice (before and after), their data is paired. In this case, we would use a paired t-test to determine if there is a significant difference in weight before and after the program, within the same group of participants.
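
A paired t-test is a one-sample t-test on the per-person differences. The sketch below uses made-up weights for six participants, with the critical value taken from a standard t-table because the standard library has no t-distribution.

```python
import math
import statistics

# Hypothetical weights in kg for the same six participants
before = [82, 90, 77, 95, 88, 101]
after = [80, 87, 76, 90, 85, 99]

differences = [b - a for b, a in zip(before, after)]

n = len(differences)
mean_diff = statistics.mean(differences)
sd_diff = statistics.stdev(differences)
t_stat = mean_diff / (sd_diff / math.sqrt(n))
df = n - 1

T_CRITICAL = 2.571  # two-sided 5% critical value for df = 5, from a t-table
reject_null = abs(t_stat) > T_CRITICAL

print(round(t_stat, 2), df, reject_null)
```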

50. Question: How do you adjust for multiple comparisons in t-tests to control the overall Type I error rate?

Sample Answer: When conducting multiple t-tests simultaneously, the chance of making a Type I error (false positive) increases. To control the overall Type I error rate, we can use methods like the Bonferroni correction, which adjusts the significance level for each individual test. Another approach is to use the False Discovery Rate (FDR) correction, which controls the proportion of false discoveries among all rejected hypotheses.

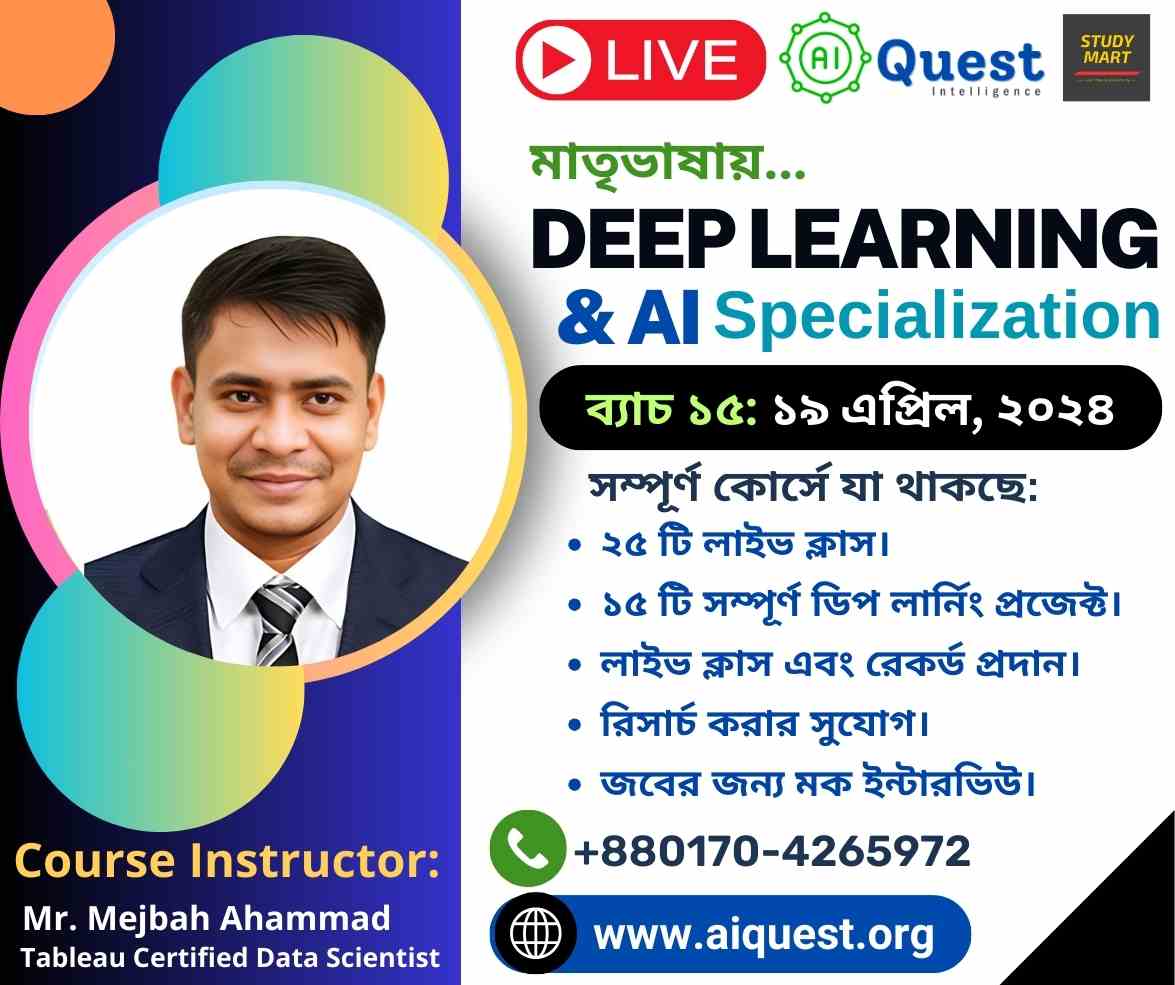

By mastering these statistical topics, data scientists can effectively analyze data, make informed decisions, and build accurate and robust models for various data-driven tasks.
