Below are 100 common data scientist job interview questions on linear algebra, ten for each of ten topics, along with sample answers:

Vectors and Vector Spaces:

1. Question: What is a vector in linear algebra?

Sample Answer: A vector is a mathematical entity that represents both magnitude and direction. In machine learning, vectors are commonly used to represent data points and features.

2. Question: What are the basic operations of vectors?

Sample Answer: The basic operations on vectors include addition, subtraction, and scalar multiplication. These operations are crucial for data manipulation and transformation in machine learning.

3. Question: Define vector normalization.

Sample Answer: Vector normalization is the process of scaling a vector to have a unit norm (length). It is often used to ensure all vectors are on the same scale for distance-based algorithms like k-nearest neighbors.
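A minimal NumPy sketch of L2 normalization follows, assuming NumPy is available. The helper name `normalize` and the zero-vector guard are illustrative choices, not a standard API:

```python
import numpy as np

def normalize(v, eps=1e-12):
    """Scale v to unit Euclidean (L2) norm."""
    v = np.asarray(v, dtype=float)
    norm = np.linalg.norm(v)
    if norm < eps:
        # A zero vector has no direction, so it cannot be normalized.
        raise ValueError("cannot normalize a (near-)zero vector")
    return v / norm

u = normalize([3.0, 4.0])
print(u)  # [0.6 0.8]
```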

4. Question: How do you calculate the dot product of two vectors?

Sample Answer: The dot product of two vectors A and B is calculated by summing the products of their corresponding elements: dot_product = Σ(A[i] * B[i]) for all i.
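The summation formula translates directly into code. This is a small sketch: the hypothetical helper `dot_product` mirrors the formula, and NumPy's `np.dot` is shown for comparison.

```python
import numpy as np

def dot_product(a, b):
    """Sum of element-wise products: sum(a[i] * b[i]) over all i."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))

A = [1, 2, 3]
B = [4, 5, 6]
print(dot_product(A, B))  # 32  (1*4 + 2*5 + 3*6)
print(np.dot(A, B))       # 32, the same result from NumPy
```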

5. Question: What is the cross product of two 3D vectors?

Sample Answer: The cross product of two 3D vectors A and B yields a new vector perpendicular to both A and B. Its magnitude is |A| * |B| * sin(θ), where θ is the angle between A and B.
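Both properties can be checked numerically with NumPy's `np.cross`. In this sketch the two input vectors are arbitrary illustrative values that sit 45 degrees apart:

```python
import numpy as np

A = np.array([2.0, 0.0, 0.0])
B = np.array([1.0, 1.0, 0.0])
C = np.cross(A, B)  # expected (0, 0, 2)

# C is perpendicular to both inputs, so both dot products are zero.
print(np.dot(C, A), np.dot(C, B))

# Its magnitude matches |A| * |B| * sin(theta), with theta taken from the dot product.
theta = np.arccos(np.dot(A, B) / (np.linalg.norm(A) * np.linalg.norm(B)))
print(np.linalg.norm(C), np.linalg.norm(A) * np.linalg.norm(B) * np.sin(theta))
```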

6. Question: Explain the concept of linear independence in vector spaces.

Sample Answer: Vectors are linearly independent if no vector can be represented as a linear combination of the others. Linear independence is essential in avoiding redundant information in datasets.
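One practical test stacks the vectors as columns of a matrix and compares its rank with the number of vectors. The sketch below assumes NumPy. `are_linearly_independent` is a hypothetical helper, and `np.linalg.matrix_rank` computes the rank from singular values with a default tolerance.

```python
import numpy as np

def are_linearly_independent(vectors, tol=None):
    """True if the vectors are linearly independent.

    Stacked as columns, they are independent exactly when the rank of the
    resulting matrix equals the number of vectors.
    """
    M = np.column_stack(vectors)
    return np.linalg.matrix_rank(M, tol=tol) == M.shape[1]

v1 = np.array([1.0, 0.0, 1.0])
v2 = np.array([0.0, 1.0, 1.0])
v3 = v1 + 2 * v2  # deliberately a linear combination of v1 and v2

print(are_linearly_independent([v1, v2]))      # True
print(are_linearly_independent([v1, v2, v3]))  # False
```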

7. Question: What is the difference between a row vector and a column vector?

Sample Answer: A row vector is a 1xN matrix (1 row, N columns), while a column vector is an Nx1 matrix (N rows, 1 column). The choice depends on the context and conventions of the problem.

8. Question: How does the dimensionality of a vector space relate to the number of linearly independent vectors?

Sample Answer: In a vector space with dimension N, the maximum number of linearly independent vectors is N. Any set of more than N vectors in that space will be linearly dependent.

9. Question: How do you find the angle between two vectors?

Sample Answer: The angle (θ) between two vectors A and B can be calculated using the dot product formula: cos(θ) = (A · B) / (|A| * |B|).
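Here is the formula as a NumPy sketch. `angle_between` is an illustrative helper name. The clip guards against rounding, which can push the cosine slightly outside [-1, 1]; `np.arccos` would then return NaN.

```python
import numpy as np

def angle_between(a, b):
    """Angle in radians between a and b, from cos(theta) = a.b / (|a| |b|)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    cos_theta = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    # Clip to [-1, 1] so floating-point rounding cannot break arccos.
    return np.arccos(np.clip(cos_theta, -1.0, 1.0))

theta = angle_between([1, 0], [1, 1])
print(np.degrees(theta))  # approximately 45.0
```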

10. Question: Describe how you would visualize high-dimensional vectors in a 2D or 3D space.

Sample Answer: To visualize high-dimensional vectors, techniques like PCA or t-SNE can be used to reduce the dimensionality while preserving the most important relationships between data points.

Matrices and Matrix Operations:

1. Question: What is a matrix in linear algebra?

Sample Answer: A matrix is a rectangular array of numbers or symbols arranged in rows and columns. Matrices are widely used in representing datasets and transformations in machine learning.

2. Question: Explain the concept of matrix addition and provide an example.

Sample Answer: Matrix addition is performed element-wise, where each corresponding element in two matrices is added together. For example, if A = [1 2; 3 4] and B = [5 6; 7 8], A + B = [6 8; 10 12].

3. Question: How do you perform matrix multiplication?

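Sample Answer: Matrix multiplication is defined only when the number of columns of the first matrix equals the number of rows of the second. Each entry of the product is the dot product of a row of the first matrix with a column of the second: C[i][j] = Σ_k A[i][k] * B[k][j]. If A is m x n and B is n x p, the result is m x p. In general, matrix multiplication is not commutative (AB ≠ BA).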
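The row-times-column definition can be written out explicitly. The sketch below assumes NumPy. `matmul` is a hypothetical teaching helper; real code should use NumPy's `@` operator, which is far faster.

```python
import numpy as np

def matmul(A, B):
    """Multiply A (m x n) by B (n x p): C[i, j] = dot(row i of A, column j of B)."""
    A = np.asarray(A, dtype=float)
    B = np.asarray(B, dtype=float)
    m, n = A.shape
    n2, p = B.shape
    if n != n2:
        raise ValueError(f"inner dimensions differ: {n} vs {n2}")
    C = np.zeros((m, p))
    for i in range(m):
        for j in range(p):
            C[i, j] = np.dot(A[i, :], B[:, j])
    return C

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul(A, B))                # rows [19, 22] and [43, 50]
print(np.array(A) @ np.array(B))   # same values via NumPy's @ operator
```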

4. Question: What are identity and zero matrices?

Sample Answer: An identity matrix is a square matrix with 1s on the main diagonal and zeros elsewhere. A zero matrix is a matrix where all elements are zeros.

5. Question: What is the transpose of a matrix?

Sample Answer: The transpose of a matrix is obtained by flipping the rows and columns, effectively changing rows to columns and vice versa.

6. Question: How do you find the determinant of a square matrix?

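Sample Answer: The determinant of a square matrix is a scalar giving the factor by which the matrix's linear transformation scales areas or volumes; a negative sign means orientation is flipped. It can be computed by cofactor expansion, which costs O(n!) and is practical only for small matrices. The standard alternative is LU decomposition: det(A) is the product of the diagonal entries of U, with the sign flipped once for each row swap.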

7. Question: Explain the concept of the trace of a matrix.

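Sample Answer: The trace of a square matrix is the sum of its diagonal elements. In machine learning it appears, for example, in the squared Frobenius norm, ||A||_F^2 = tr(A^T A), and in the total variance of a dataset, which equals the trace of its covariance matrix.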

8. Question: What are orthogonal matrices?

Sample Answer: Orthogonal matrices are square matrices whose rows and columns are orthogonal unit vectors. The inverse of an orthogonal matrix is its transpose.

9. Question: How do you check if a matrix is singular?

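Sample Answer: A square matrix is singular if its determinant is zero, which means it has no inverse at all. Equivalently, its rank is less than its size, so its columns are linearly dependent. In floating-point arithmetic, checking the rank or the condition number is more reliable than testing whether the computed determinant is exactly zero.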
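The sketch below contrasts the determinant test with a rank-based test, assuming NumPy. `is_singular` is a hypothetical helper. The rank comes from `np.linalg.matrix_rank`, which counts singular values above a tolerance, and `np.linalg.cond` reports the condition number.

```python
import numpy as np

def is_singular(A, tol=None):
    """Treat a square matrix as singular when it is (numerically) rank-deficient.

    A test like det(A) == 0 is fragile in floating point, so this
    counts significant singular values instead.
    """
    A = np.asarray(A, dtype=float)
    if A.shape[0] != A.shape[1]:
        raise ValueError("singularity is defined for square matrices")
    return np.linalg.matrix_rank(A, tol=tol) < A.shape[0]

S = np.array([[1.0, 2.0], [2.0, 4.0]])  # second row = 2 * first row
N = np.array([[1.0, 2.0], [3.0, 4.0]])

print(np.linalg.det(S), is_singular(S))  # det ~ 0, True
print(np.linalg.det(N), is_singular(N))  # det ~ -2, False
print(np.linalg.cond(N))                 # very large values would signal near-singularity
```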

10. Question: Describe how you would use matrix multiplication for linear regression.

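Sample Answer: In linear regression, the model can be written as y = Xβ + ε. Here y is the target vector, X is the feature matrix (usually with a column of ones for the intercept), β is the vector of coefficients, and ε is the error term. The least-squares estimate solves the normal equations X^T X β = X^T y, which gives β = (X^T X)^(-1) X^T y when X^T X is invertible. In practice, solvers based on QR or SVD are preferred to forming the inverse explicitly.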
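Here is a short sketch on synthetic data; the coefficients and noise level are purely illustrative, and NumPy is assumed. The first estimate solves the normal equations as a linear system rather than inverting X^T X. The second uses `np.linalg.lstsq`, a least-squares solver that is numerically safer when features are nearly collinear.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative synthetic data: y = 3 + 2*x1 - 1*x2 + small noise.
n = 200
features = rng.normal(size=(n, 2))
X = np.column_stack([np.ones(n), features])  # prepend a column of ones for the intercept
beta_true = np.array([3.0, 2.0, -1.0])
y = X @ beta_true + 0.01 * rng.normal(size=n)

# Normal equations (X^T X) beta = X^T y, solved as a system rather than by inversion.
beta_normal = np.linalg.solve(X.T @ X, X.T @ y)

# A least-squares solver avoids forming X^T X at all.
beta_lstsq, residuals, rank, sing_vals = np.linalg.lstsq(X, y, rcond=None)

print(beta_normal)  # close to [3, 2, -1]
print(beta_lstsq)   # essentially the same estimate
```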

Matrix Decompositions:

1. Question: What is Singular Value Decomposition (SVD)?

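Sample Answer: SVD is a matrix decomposition technique that represents any m x n matrix as the product of three matrices: A = UΣV^T. U and V are orthogonal matrices, and Σ is a diagonal matrix of non-negative singular values, conventionally sorted in decreasing order. It is used in dimensionality reduction and data compression.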

2. Question: How does Principal Component Analysis (PCA) use SVD?

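Sample Answer: PCA applies SVD to the mean-centered data matrix X = UΣV^T. The columns of V (the right singular vectors) are the eigenvectors of the covariance matrix, i.e., the principal components, which point in the directions of maximum variance. The variance along the i-th component is σ_i^2 / (n - 1), where n is the number of samples. Keeping only the first k components reduces the dimensionality of the dataset.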
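Below is a minimal PCA-via-SVD sketch, assuming NumPy. `pca_svd` is a hypothetical helper and the data are random and illustrative. The final check confirms that the variances obtained from the singular values match the top eigenvalues of the sample covariance matrix.

```python
import numpy as np

def pca_svd(X, k):
    """Project the rows of X (n samples x d features) onto the top-k principal components."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                 # PCA requires centered data
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:k]                     # rows are the principal directions
    explained_variance = s[:k] ** 2 / (X.shape[0] - 1)
    scores = Xc @ components.T              # coordinates in the k-dimensional subspace
    return scores, components, explained_variance

rng = np.random.default_rng(42)
X = rng.normal(size=(100, 3)) @ np.diag([5.0, 1.0, 0.1])  # illustrative data
scores, comps, var = pca_svd(X, k=2)
print(scores.shape)  # (100, 2)

# Cross-check against the eigenvalues of the sample covariance matrix (descending).
eigvals = np.linalg.eigvalsh(np.cov(X, rowvar=False))[::-1]
print(np.allclose(var, eigvals[:2]))  # True
```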

3. Question: Explain the concept of Eigenvalue Decomposition.

Sample Answer: Eigenvalue decomposition represents a diagonalizable square matrix A as a product of its eigenvectors and eigenvalues: A = VΛV^(-1). V is the matrix whose columns are the eigenvectors, and Λ is the diagonal matrix of eigenvalues. For a real symmetric matrix, V can be chosen orthogonal, so A = VΛV^T.

4. Question: How does the eigenvalue decomposition help in solving systems of linear equations?

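Sample Answer: Eigenvalue decomposition diagonalizes a matrix, which simplifies solving a system of linear equations Ax = b. Here A is the coefficient matrix, x is the vector of variables, and b is the right-hand side vector. If A = VΛV^(-1) and no eigenvalue is zero, then x = VΛ^(-1)V^(-1)b, and inverting the diagonal matrix Λ only requires taking reciprocals of its entries. This is mostly useful conceptually or when the decomposition is reused; for a single system, LU or Cholesky factorization is cheaper.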

5. Question: What is the relationship between SVD and eigenvalue decomposition?

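Sample Answer: SVD is a generalization of eigenvalue decomposition that works for any m x n matrix, while eigenvalue decomposition applies only to (diagonalizable) square matrices. The two are linked in three ways:
- The right singular vectors of A are eigenvectors of A^T A.
- The left singular vectors of A are eigenvectors of AA^T.
- The singular values of A are the square roots of the eigenvalues of A^T A.

For a symmetric positive semi-definite matrix, the two decompositions coincide.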

6. Question: How can you use SVD for image compression?

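Sample Answer: In image compression, an image can be represented as a matrix, and SVD can extract its most significant components (the largest singular values and their singular vectors) to achieve compression while preserving essential features. Keeping the top k components of an m x n image requires storing k(m + n + 1) numbers instead of mn.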

7. Question: What are the properties of orthogonal matrices, and how are they used in decompositions?

Sample Answer: Orthogonal matrices have the property that their transpose is equal to their inverse. This property is useful in many decompositions, such as SVD and QR decomposition.

8. Question: Discuss the computational complexity of SVD and other decomposition methods.

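Sample Answer: For an m x n matrix with m ≥ n, a full SVD costs O(mn^2) operations with a relatively large constant, which becomes expensive for large datasets. QR decomposition has the same O(mn^2) order but is noticeably cheaper in practice. When only the top k singular values and vectors are needed, truncated or randomized SVD methods are much faster than a full decomposition.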

9. Question: What is the low-rank approximation of a matrix, and why is it useful?

Sample Answer: A low-rank approximation keeps only the k largest singular values of a matrix and their corresponding singular vectors: A_k = U_k Σ_k V_k^T. By the Eckart-Young theorem, A_k is the best rank-k approximation in both the Frobenius and spectral norms. It reduces memory and computational requirements while preserving the most important structure in the data.
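The truncated SVD takes only a few lines of NumPy. In this sketch `low_rank_approx` is a hypothetical helper and the matrix is random, illustrative data. The check confirms the Eckart-Young error formula: the Frobenius error equals the root-sum-square of the discarded singular values.

```python
import numpy as np

def low_rank_approx(A, k):
    """Best rank-k approximation of A (Frobenius and spectral norm) via truncated SVD."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

rng = np.random.default_rng(1)
A = rng.normal(size=(50, 30))
k = 5
A_k = low_rank_approx(A, k)

s = np.linalg.svd(A, compute_uv=False)
err = np.linalg.norm(A - A_k, "fro")
print(np.isclose(err, np.sqrt(np.sum(s[k:] ** 2))))  # True
print(np.linalg.matrix_rank(A_k))                    # 5
# Storage for the factors: k * (m + n + 1) numbers instead of m * n.
print(k * (A.shape[0] + A.shape[1] + 1), A.size)     # 405 1500
```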

10. Question: How would you use matrix decomposition techniques to detect collinearity in a dataset?

Sample Answer: Matrix decomposition methods like SVD can help detect collinearity by revealing small singular values, indicating that some features are nearly linearly dependent, which can lead to issues in machine learning models.
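Here is a small illustration with synthetic features, assuming NumPy. The third feature is deliberately built as nearly the sum of the other two, which shows up as a tiny last singular value and a huge condition number. Standardizing first is a common choice that keeps differences in column scale from dominating the singular values.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 500
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + x2 + 1e-6 * rng.normal(size=n)  # nearly an exact linear combination
X = np.column_stack([x1, x2, x3])
Xs = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize each feature

s = np.linalg.svd(Xs, compute_uv=False)
print(s)            # the last singular value is tiny compared with the first
print(s[0] / s[-1]) # condition number: very large, signalling near-collinearity
```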

Systems of Linear Equations:

1. Question: What is a system of linear equations?

Sample Answer: A system of linear equations consists of multiple equations involving linear combinations of variables. Solving this system means finding values for the variables that satisfy all equations simultaneously.

2. Question: How do you represent a system of linear equations in matrix form?

Sample Answer: A system of linear equations can be represented as AX = B, where A is the coefficient matrix, X is the vector of variables, and B is the right-hand side vector.

3. Question: What is Gaussian elimination, and how does it work?

Sample Answer: Gaussian elimination is a method to solve systems of linear equations by performing row operations to convert the augmented matrix [A | B] into row-echelon form and then back-substituting to obtain the solutions.
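The procedure maps directly onto code. This minimal NumPy sketch adds partial pivoting, a standard refinement not mentioned in the answer, which swaps in the row with the largest pivot for numerical stability. The helper name and the fixed pivot tolerance are illustrative assumptions.

```python
import numpy as np

def gaussian_elimination(A, b, tol=1e-12):
    """Solve A x = b (A square, nonsingular) by Gaussian elimination with
    partial pivoting, followed by back substitution."""
    A = np.array(A, dtype=float)  # work on copies; the caller's data is untouched
    b = np.array(b, dtype=float)
    n = A.shape[0]
    # Forward elimination: reduce the augmented system [A | b] to row-echelon form.
    for k in range(n):
        # Partial pivoting: bring the row with the largest |A[i, k]| (i >= k) to row k.
        p = k + int(np.argmax(np.abs(A[k:, k])))
        if abs(A[p, k]) < tol:
            raise ValueError("matrix is singular or nearly singular")
        if p != k:
            A[[k, p]] = A[[p, k]]
            b[[k, p]] = b[[p, k]]
        # Eliminate the entries below the pivot.
        for i in range(k + 1, n):
            factor = A[i, k] / A[k, k]
            A[i, k:] -= factor * A[k, k:]
            b[i] -= factor * b[k]
    # Back substitution on the upper-triangular system.
    x = np.zeros(n)
    for i in range(n - 1, -1, -1):
        x[i] = (b[i] - A[i, i + 1:] @ x[i + 1:]) / A[i, i]
    return x

A = [[2, 1, -1], [-3, -1, 2], [-2, 1, 2]]
b = [8, -11, -3]
print(gaussian_elimination(A, b))  # approximately [ 2.  3. -1.]
```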

4. Question: Explain the concept of LU decomposition.

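Sample Answer: LU decomposition factorizes matrix A into the product of a lower triangular matrix (L) and an upper triangular matrix (U). In practice, rows are permuted for numerical stability, giving PA = LU. It is essentially Gaussian elimination with the elimination multipliers stored in L, so it costs about the same for a single system. Its advantage appears when the same matrix A must be reused.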

5. Question: How does LU decomposition help in solving multiple systems with the same coefficient matrix?

Sample Answer: Once LU decomposition is computed for matrix A, solving multiple systems of linear equations with the same A but different right-hand side vectors B becomes computationally efficient since the factorization needs to be done only once.
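To illustrate "factor once, solve many", here is a minimal NumPy sketch of LU with partial pivoting. The helpers `lu_decompose` and `lu_substitute` are hypothetical teaching code. In practice, SciPy's `scipy.linalg.lu_factor` and `scipy.linalg.lu_solve` do this job.

```python
import numpy as np

def lu_decompose(A, tol=1e-12):
    """Factor A with partial pivoting so that A[perm] == L @ U.

    L is unit lower-triangular, U is upper-triangular, perm records the row order.
    """
    U = np.array(A, dtype=float)
    n = U.shape[0]
    L = np.eye(n)
    perm = np.arange(n)
    for k in range(n):
        p = k + int(np.argmax(np.abs(U[k:, k])))
        if abs(U[p, k]) < tol:
            raise ValueError("matrix is singular or nearly singular")
        if p != k:
            U[[k, p]] = U[[p, k]]
            perm[[k, p]] = perm[[p, k]]
            L[[k, p], :k] = L[[p, k], :k]  # keep earlier multipliers with their rows
        for i in range(k + 1, n):
            L[i, k] = U[i, k] / U[k, k]
            U[i, k + 1:] -= L[i, k] * U[k, k + 1:]
            U[i, k] = 0.0                  # eliminated entry is zero by construction
    return L, U, perm

def lu_substitute(L, U, perm, b):
    """Solve A x = b from a precomputed factorization with two triangular solves."""
    y = np.array(b, dtype=float)[perm]  # apply the row permutation to b
    n = len(y)
    for i in range(n):                  # forward substitution: L y = b[perm]
        y[i] -= L[i, :i] @ y[:i]
    x = np.zeros(n)
    for i in range(n - 1, -1, -1):      # back substitution: U x = y
        x[i] = (y[i] - U[i, i + 1:] @ x[i + 1:]) / U[i, i]
    return x

A = np.array([[4.0, 3.0, 2.0], [2.0, 1.0, 3.0], [3.0, 2.0, 1.0]])
L, U, perm = lu_decompose(A)          # O(n^3) work, done once
for b in ([1.0, 2.0, 3.0], [0.0, 1.0, 0.0]):
    x = lu_substitute(L, U, perm, b)  # O(n^2) work per right-hand side
    print(np.allclose(A @ x, b))      # True
```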

6. Question: What is the importance of a unique solution in a system of linear equations?

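Sample Answer: A unique solution means the equations pin down every variable, with no redundancy that leaves free variables. This happens exactly when the system is consistent and the coefficient matrix has full column rank. For a square system, that means A is invertible.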

7. Question: What are underdetermined and overdetermined systems of linear equations?

Sample Answer: An underdetermined system has more variables than equations; it has either no solution or infinitely many, never exactly one. An overdetermined system has more equations than variables and usually has no exact solution, so an approximate (typically least-squares) solution is sought.

8. Question: How do you check if a system of linear equations is consistent or inconsistent?

Sample Answer: A system is consistent if it has at least one solution and inconsistent if it has none. To check, reduce the augmented matrix [A | B] to row-echelon form. The system is inconsistent exactly when a row of the form [0 0 ... 0 | c] with c ≠ 0 appears. Equivalently, it is consistent if and only if rank(A) = rank([A | B]).

9. Question: Discuss the application of linear regression as a system of linear equations.

Sample Answer: Linear regression can be formulated as a system of linear equations, where the goal is to find the coefficients that minimize the sum of squared residuals between the predicted and actual values.

10. Question: How would you use matrix inversion or decomposition to solve a system of linear equations?

Sample Answer: One option is to compute the inverse of A and then X = A^(-1) * B. In practice, it is better to factor A (for example with LU) and solve by forward and back substitution without forming A^(-1) explicitly. This is both cheaper and numerically more stable than computing the inverse.

Matrix Inversion and Pseudoinverse:

1. Question: What is the inverse of a square matrix, and how is it computed?

Sample Answer: The inverse of a square matrix A is denoted as A^(-1) and satisfies A * A^(-1) = I, where I is the identity matrix. It can be computed using various methods, such as Gaussian elimination or LU decomposition.

2. Question: Under what conditions does a matrix have an inverse?

Sample Answer: A square matrix has an inverse if its determinant is nonzero, which means it is nonsingular or full rank. Singular matrices, those with a determinant of zero, do not have an inverse.

3. Question: What is the relationship between matrix inversion and solving systems of linear equations?

Sample Answer: Solving a system of linear equations AX = B can be done by finding the inverse of A and calculating X = A^(-1) * B. However, directly computing the inverse is often not recommended due to numerical stability issues.

4. Question: What is the pseudoinverse of a matrix, and when is it used?

Sample Answer: The pseudoinverse, denoted as A^+, is a generalization of the matrix inverse that can be computed for non-square or singular matrices. It is used in solving overdetermined systems and least-squares problems.

5. Question: Compare the computation of the matrix inverse and pseudoinverse.

Sample Answer: The matrix inverse requires the matrix to be square and nonsingular, and it is usually computed via LU decomposition in O(n^3) operations. The pseudoinverse can be computed for any matrix, which makes it more flexible. However, it is typically computed via SVD, which is more expensive than an LU-based inverse of the same size.

6. Question: How do you compute the pseudoinverse using Singular Value Decomposition (SVD)?

Sample Answer: If A = UΣV^T is the SVD of A, its pseudoinverse is A^+ = VΣ^+U^T. Σ^+ is formed by taking the reciprocal of each nonzero singular value, leaving the zeros in place, and transposing the result. In floating point, singular values below a small tolerance are treated as zero.
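The construction translates almost line for line into NumPy. In this sketch `pinv_svd` is a hypothetical helper, and its tolerance rule (largest dimension times machine epsilon times the largest singular value) is one common choice. The four data points are illustrative, and `np.linalg.pinv` is the built-in equivalent.

```python
import numpy as np

def pinv_svd(A):
    """Moore-Penrose pseudoinverse A+ = V Sigma+ U^T, built from the thin SVD."""
    A = np.asarray(A, dtype=float)
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    # Sigma+: invert singular values above a tolerance, treat the rest as zero.
    tol = max(A.shape) * np.finfo(float).eps * s.max()
    s_inv = np.zeros_like(s)
    keep = s > tol
    s_inv[keep] = 1.0 / s[keep]
    return Vt.T @ np.diag(s_inv) @ U.T

# Overdetermined system: fit y = b0 + b1 * x to four points (illustrative data).
A = np.array([[1.0, 1.0], [1.0, 2.0], [1.0, 3.0], [1.0, 4.0]])
y = np.array([6.0, 5.0, 7.0, 10.0])
print(pinv_svd(A) @ y)  # approximately [3.5 1.4], the least-squares solution
```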

7. Question: Explain the application of pseudoinverse in solving linear regression with an overdetermined system.

Sample Answer: In linear regression with more equations than variables (overdetermined), the pseudoinverse is used to find the least-squares solution, which minimizes the sum of squared residuals.

8. Question: What are the advantages and limitations of using the pseudoinverse?

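Sample Answer: The pseudoinverse solves least-squares problems and handles singular or underdetermined systems. When infinitely many solutions exist, it returns the unique one with the smallest norm, which may not be the solution the application needs. Because it inverts singular values, very small ones become very large and can amplify noise in the data. The tolerance for discarding them, or an alternative such as regularization, should therefore be chosen with care.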

9. Question: In which scenarios would you prefer to use the pseudoinverse over other methods?

Sample Answer: The pseudoinverse is useful when solving least-squares problems, finding approximate solutions to underdetermined systems, and in applications where matrices may be singular or close to singular.

10. Question: How can you assess the quality of the solution obtained using the pseudoinverse?

Sample Answer: The quality of the solution obtained using the pseudoinverse can be assessed by calculating the residuals (difference between predicted and actual values) and evaluating how well the model fits the data.

Eigenvalues and Eigenvectors:

1. Question: What are the eigenvalues and eigenvectors of a matrix?

Sample Answer: An eigenvector of a square matrix A is a nonzero vector v whose direction is preserved (or exactly reversed) when A acts on it. The corresponding eigenvalue λ is the scalar by which it is scaled: Av = λv.

2. Question: How do you find the eigenvalues and eigenvectors of a matrix?

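Sample Answer: The eigenvalues can be found by solving the characteristic equation det(A - λI) = 0, where A is the matrix, I is the identity matrix, and λ is the eigenvalue. The eigenvectors are then the nonzero solutions v of (A - λI)v = 0 for each eigenvalue λ. For large matrices, numerical libraries use iterative algorithms such as QR iterations instead of the characteristic polynomial.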
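For a 2x2 matrix, the characteristic polynomial is λ^2 - tr(A)λ + det(A), so its roots can be checked directly. The sketch assumes NumPy and uses an illustrative matrix. Root-finding on the polynomial is fine at this size but numerically poor in general, which is why `np.linalg.eig` is used for the eigenvectors.

```python
import numpy as np

A = np.array([[2.0, 1.0], [1.0, 2.0]])

# 2x2 case: det(A - lambda I) = lambda^2 - tr(A) * lambda + det(A).
coeffs = [1.0, -np.trace(A), np.linalg.det(A)]
print(np.sort(np.roots(coeffs)))  # approximately [1. 3.]

# In practice, call an eigensolver; eigenvectors are the *columns* of eigvecs.
eigvals, eigvecs = np.linalg.eig(A)
for lam, v in zip(eigvals, eigvecs.T):
    print(lam, np.allclose(A @ v, lam * v))  # each pair satisfies A v = lambda v
```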

3. Question: Explain the geometric interpretation of eigenvalues and eigenvectors.

Sample Answer: Eigenvalues represent the scaling factor of the eigenvectors when a matrix operates on them, while eigenvectors represent the directions in which the matrix acts like a simple scalar multiplication.

4. Question: How are eigenvalues and eigenvectors used in Principal Component Analysis (PCA)?

Sample Answer: PCA uses the eigenvectors of the covariance matrix to find the principal components, which are the directions of maximum variance in the data. The corresponding eigenvalues represent the amount of variance explained by each principal component.

5. Question: What is the significance of the dominant eigenvalue in iterative algorithms like PageRank?

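Sample Answer: PageRank is the eigenvector of the Google matrix associated with its dominant eigenvalue. That eigenvalue equals 1 because the matrix is stochastic, and its eigenvector is the steady-state distribution. Power iteration converges to it at a rate governed by the ratio |λ2| / |λ1| of the second-largest to the largest eigenvalue magnitude. For the Google matrix, this ratio is at most the damping factor (commonly 0.85).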

6. Question: How can you determine whether a matrix is diagonalizable?

Sample Answer: A matrix is diagonalizable if it has N linearly independent eigenvectors, where N is the size of the matrix. If a matrix has N distinct eigenvalues, it is always diagonalizable.

7. Question: What is the relationship between the trace and the sum of eigenvalues of a matrix?

Sample Answer: The trace of a matrix is equal to the sum of its eigenvalues. In other words, tr(A) = Σλi, where λi are the eigenvalues of A.
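A quick numerical check on a random, generally non-symmetric matrix, assuming NumPy. The eigenvalues may come in complex conjugate pairs, but their imaginary parts cancel in the sum.

```python
import numpy as np

rng = np.random.default_rng(7)
A = rng.normal(size=(4, 4))       # random, generally non-symmetric matrix
eigvals = np.linalg.eigvals(A)    # may be complex (conjugate pairs)
print(np.trace(A), eigvals.sum()) # equal up to rounding
```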

8. Question: How do you use eigenvalues and eigenvectors in data compression techniques like PCA?

Sample Answer: In PCA, the eigenvalues and eigenvectors of the covariance matrix are used to project the data into a lower-dimensional space while preserving as much variance as possible.

9. Question: Explain the concept of the power iteration method for finding the dominant eigenvalue.

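Sample Answer: The power iteration method is an iterative algorithm for finding the dominant eigenvalue of a matrix and its corresponding eigenvector. It repeatedly multiplies a random starting vector by the matrix and normalizes the result. Two conditions are needed for convergence: the matrix must have a single eigenvalue of strictly largest magnitude, and the starting vector must have a nonzero component along that eigenvalue's eigenvector. When both hold, the iterates converge to the dominant eigenvector, and the Rayleigh quotient v^T A v (for unit-length v) converges to the dominant eigenvalue.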
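Here is a minimal NumPy sketch of power iteration on an illustrative symmetric matrix. `power_iteration` is a hypothetical helper. Stopping when the residual ||Av - λv|| is small is one reasonable convergence rule among several.

```python
import numpy as np

def power_iteration(A, num_iters=1000, tol=1e-10, seed=0):
    """Estimate the dominant eigenpair of a square matrix A.

    Assumes one eigenvalue of strictly largest magnitude and a start vector with a
    nonzero component along its eigenvector (true almost surely for a random start).
    """
    rng = np.random.default_rng(seed)
    v = rng.normal(size=A.shape[0])
    v /= np.linalg.norm(v)
    lam = v @ A @ v
    for _ in range(num_iters):
        w = A @ v
        v = w / np.linalg.norm(w)  # multiply, then renormalize
        lam = v @ A @ v            # Rayleigh quotient (v has unit length)
        if np.linalg.norm(A @ v - lam * v) < tol:
            break                  # residual is small: converged
    return lam, v

A = np.array([[2.0, 1.0], [1.0, 3.0]])
lam, v = power_iteration(A)
print(lam)  # approximately 3.618, i.e. (5 + sqrt(5)) / 2
```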

10. Question: How can you use the eigendecomposition of a symmetric matrix to perform spectral clustering?

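Sample Answer: Spectral clustering starts from a symmetric similarity matrix W and forms the graph Laplacian L = D - W, where D is the diagonal degree matrix. It then computes the eigenvectors of L for its k smallest eigenvalues. Each data point is represented by its row in the matrix of these k eigenvectors, and a standard algorithm such as k-means clusters those rows.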
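Here is a toy sketch with a hand-made similarity matrix, assuming NumPy; the six-node graph is purely illustrative. For k = 2, the k-means step is simplified to thresholding the sign of the eigenvector for the second-smallest eigenvalue (the Fiedler vector). For larger k, k-means on the eigenvector rows would be used instead.

```python
import numpy as np

# Illustrative similarity matrix: nodes 0-2 and 3-5 are tightly connected groups,
# joined by a single weak edge (0.1) between nodes 2 and 3.
W = np.array([
    [0, 1, 1, 0, 0, 0],
    [1, 0, 1, 0, 0, 0],
    [1, 1, 0, 0.1, 0, 0],
    [0, 0, 0.1, 0, 1, 1],
    [0, 0, 0, 1, 0, 1],
    [0, 0, 0, 1, 1, 0],
], dtype=float)

D = np.diag(W.sum(axis=1))  # degree matrix
L = D - W                   # unnormalized graph Laplacian (symmetric, PSD)

eigvals, eigvecs = np.linalg.eigh(L)  # eigh returns ascending eigenvalues
# The smallest eigenvalue is 0 (constant eigenvector); the next eigenvector splits the graph.
fiedler = eigvecs[:, 1]
labels = (fiedler > 0).astype(int)
print(labels)  # nodes 0-2 share one label, nodes 3-5 the other
```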

Linear Transformations:

1. Question: What is a linear transformation in linear algebra?

Sample Answer: A linear transformation is a function that maps vectors from one vector space to another, preserving addition and scalar multiplication.

2. Question: How does matrix multiplication represent a linear transformation?

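Sample Answer: An m x n matrix A defines a linear transformation T(x) = Ax from R^n to R^m. Conversely, once bases are chosen, every linear transformation between finite-dimensional spaces can be written this way. The columns of A are the images of the standard basis vectors, T(e_1), ..., T(e_n).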

3. Question: What are the properties of linear transformations?

Sample Answer: Linear transformations preserve vector addition and scalar multiplication, meaning T(a * v + b * w) = a * T(v) + b * T(w) for any vectors v, w, and scalars a, b.

4. Question: How can you determine if a matrix represents a linear transformation?

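Sample Answer: Every matrix represents a linear transformation, because A(a * v + b * w) = a * Av + b * Aw for any vectors v, w and scalars a, b. The practical question is usually the reverse: is a given map T linear? To check, verify that T(a * v + b * w) = a * T(v) + b * T(w) for all vectors and scalars; in particular, T must map 0 to 0. If this holds, the matrix of T has columns T(e_1), ..., T(e_n).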
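Below is a sketch of both directions, assuming NumPy. One helper builds the matrix of a map from its action on basis vectors; the other spot-checks linearity on random inputs. Both helper names are hypothetical, and a passing spot check is evidence of linearity, not a proof. The translation example fails the check, as expected.

```python
import numpy as np

def matrix_of(T, n):
    """Standard matrix of a linear map T on R^n: its columns are T(e_1), ..., T(e_n)."""
    return np.column_stack([T(e) for e in np.eye(n)])

def looks_linear(T, n, trials=100, seed=0):
    """Randomized spot check of T(a*v + b*w) == a*T(v) + b*T(w); evidence, not proof."""
    rng = np.random.default_rng(seed)
    for _ in range(trials):
        v, w = rng.normal(size=n), rng.normal(size=n)
        a, b = rng.normal(size=2)
        if not np.allclose(T(a * v + b * w), a * T(v) + b * T(w)):
            return False
    return True

def rotate90(x):
    return np.array([-x[1], x[0]])   # rotation by 90 degrees: linear

def shift(x):
    return x + np.array([1.0, 0.0])  # translation: not linear

print(looks_linear(rotate90, 2))  # True
print(matrix_of(rotate90, 2))     # columns T(e_1) = (0, 1) and T(e_2) = (-1, 0)
print(looks_linear(shift, 2))     # False
```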

5. Question: Give an example of a linear transformation from R^2 to R^3.

Sample Answer: One example is T(x, y) = (x, y, x + y), which maps each point of R^2 to a point on the plane z = x + y in R^3. Its matrix is the 3 x 2 matrix [1 0; 0 1; 1 1].

6. Question: What is the relationship between linear transformations and eigenvectors?

Sample Answer: Eigenvectors are special vectors that remain in the same direction after a linear transformation, only scaling by the eigenvalue.

7. Question: How do you use linear transformations in image processing?

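Sample Answer: In image processing, linear transformations such as rotation, scaling, reflection, and shearing are used to manipulate images, for example to align or augment them. Translation is not linear because it moves the origin. It is an affine transformation, usually handled with homogeneous coordinates.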

8. Question: Explain the concept of homogeneous coordinates and how they relate to linear transformations.

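Sample Answer: Homogeneous coordinates represent an n-dimensional point with n + 1 coordinates, for example (x, y) as (x, y, 1). Translation is not linear in the original coordinates, but in this representation it becomes multiplication by an (n + 1) x (n + 1) matrix. As a result, rotations, scalings, and translations can all be composed by matrix multiplication.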
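Here is a 2-D sketch with 3x3 homogeneous matrices, assuming NumPy; the helper names and the example point are illustrative. Because M = T @ R, the rotation is applied first and the translation second.

```python
import numpy as np

def translation(tx, ty):
    """3x3 homogeneous matrix that translates 2-D points by (tx, ty)."""
    return np.array([[1.0, 0.0, tx],
                     [0.0, 1.0, ty],
                     [0.0, 0.0, 1.0]])

def rotation(theta):
    """3x3 homogeneous matrix that rotates 2-D points by theta radians about the origin."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0],
                     [s, c, 0.0],
                     [0.0, 0.0, 1.0]])

p = np.array([1.0, 0.0, 1.0])                     # point (1, 0) as (x, y, 1)
M = translation(2.0, 3.0) @ rotation(np.pi / 2)   # rotate, then translate
q = M @ p
print(q[:2] / q[2])  # approximately [2. 4.]
```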

9. Question: What is the inverse of a linear transformation, and when does it exist?

Sample Answer: The inverse of a linear transformation is another transformation that undoes the effects of the original transformation. It exists when the transformation is one-to-one and onto.

10. Question: How can you use linear transformations to solve systems of linear equations?

Sample Answer: A system of linear equations can be represented as a matrix equation AX = B, where A is the coefficient matrix, X is the vector of variables, and B is the right-hand side vector. By applying the inverse of A (if it exists) to both sides, you can find the solution for X.

Inner Products and Orthogonality:

1. Question: What is an inner product in linear algebra?

Sample Answer: An inner product generalizes the dot product to arbitrary real or complex vector spaces. It takes two vectors and returns a scalar, and it defines the notions of length (norm) and angle in the space.

2. Question: What are the properties of an inner product?

Sample Answer: The properties of an inner product include linearity in the first argument, conjugate symmetry, and positive definiteness.

3. Question: How can you compute the angle between two vectors using the inner product?

Sample Answer: The angle (θ) between two vectors A and B can be calculated as cos(θ) = (A · B) / (|A| * |B|), where |A| and |B| are the magnitudes of the vectors.

4. Question: Explain the concept of orthogonality between vectors.

Sample Answer: Two vectors are orthogonal if their inner product is zero, meaning they form a 90-degree angle in the vector space.

5. Question: What are orthonormal vectors, and why are they important?

Sample Answer: Orthonormal vectors are vectors with unit lengths that are orthogonal to each other. They are important in various applications, such as representing bases in vector spaces and performing transformations.

6. Question: How can you use inner products to find the projection of a vector onto another vector?

Sample Answer: The projection of vector A onto vector B can be calculated as (A · B) / (|B| * |B|) * B.
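Here is the projection formula in NumPy, with `project` as an illustrative helper name. The residual a - p should be orthogonal to b, which makes a handy self-check.

```python
import numpy as np

def project(a, b):
    """Orthogonal projection of a onto the line spanned by b: (a.b / b.b) * b."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return (np.dot(a, b) / np.dot(b, b)) * b

a = np.array([3.0, 4.0])
b = np.array([1.0, 0.0])
p = project(a, b)
print(p)                 # [3. 0.]
print(np.dot(a - p, b))  # 0.0: the residual is orthogonal to b
```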

7. Question: Explain the Gram-Schmidt process for orthogonalizing a set of vectors.

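Sample Answer: The Gram-Schmidt process converts a set of linearly independent vectors into an orthogonal set that spans the same subspace. Each vector in turn has its projections onto the previously constructed vectors subtracted. Normalizing each result to unit length then yields an orthonormal basis.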
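Here is a minimal sketch of the modified Gram-Schmidt variant, assuming NumPy. It subtracts projections from the running, already-updated vector, which behaves better in floating point than the classical form. The helper name and the dependence tolerance are illustrative; for production use, `np.linalg.qr` is the more robust route.

```python
import numpy as np

def gram_schmidt(vectors, tol=1e-12):
    """Orthonormalize linearly independent vectors (modified Gram-Schmidt).

    Returns a matrix whose orthonormal columns span the same subspace.
    """
    basis = []
    for v in vectors:
        w = np.array(v, dtype=float)
        for q in basis:
            w -= np.dot(q, w) * q  # remove the component along each earlier q
        norm = np.linalg.norm(w)
        if norm < tol:
            raise ValueError("vectors are linearly dependent")
        basis.append(w / norm)
    return np.column_stack(basis)

Q = gram_schmidt([[1, 1, 0], [1, 0, 1], [0, 1, 1]])
print(np.allclose(Q.T @ Q, np.eye(3)))  # True: columns are orthonormal
```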

8. Question: How does orthogonality help in solving least-squares problems?

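Sample Answer: With an orthonormal basis, the coefficients of the projection are simply inner products, so no system of equations needs to be solved. In practice, the QR decomposition X = QR, where Q has orthonormal columns, reduces the least-squares problem to the triangular system Rβ = Q^T y. This is more numerically stable than solving the normal equations.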
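Here is a QR-based least-squares fit in NumPy on a small illustrative dataset. `np.linalg.solve` is used on R for brevity; a dedicated triangular solver would exploit R's structure.

```python
import numpy as np

# Illustrative data: fit y = b0 + b1 * x to four points.
X = np.array([[1.0, 1.0], [1.0, 2.0], [1.0, 3.0], [1.0, 4.0]])
y = np.array([6.0, 5.0, 7.0, 10.0])

Q, R = np.linalg.qr(X)              # reduced QR: Q (4x2) orthonormal columns, R (2x2) upper-triangular
beta = np.linalg.solve(R, Q.T @ y)  # R beta = Q^T y
print(beta)                         # approximately [3.5 1.4]
```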

9. Question: Discuss the use of inner products in defining norms in vector spaces.

Sample Answer: The inner product of a vector with itself is used to define the norm (length) of the vector, as ||A|| = √(A · A).

10. Question: How do you use orthogonal vectors in computing the determinant of a matrix?

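Sample Answer: The determinant of an orthogonal matrix is always +1 or -1: +1 for a rotation, and -1 when orientation is reversed, as in a reflection. This helps with general matrices through the QR decomposition A = QR. Since det(Q) = ±1, |det(A)| equals the product of the absolute values of the diagonal entries of R.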

Matrix Rank and Null Space:

1. Question: What is the rank of a matrix?

Sample Answer: The rank of a matrix is the maximum number of linearly independent rows or columns in the matrix. It provides information about the dimensionality of the column or row space.

2. Question: How do you calculate the rank of a matrix?

Sample Answer: The rank can be determined by performing row operations to bring the matrix to row-echelon form and counting the non-zero rows. Numerically, the rank is usually computed from the SVD by counting the singular values above a small tolerance.

3. Question: Explain the concept of full rank and rank-deficient matrices.

Sample Answer: An m x n matrix has full rank if its rank equals min(m, n), the largest possible value. It is rank-deficient if its rank is less than min(m, n).

4. Question: What is the relationship between the rank and the nullity of a matrix?

Sample Answer: The rank-nullity theorem states that for an m x n matrix A, rank(A) + nullity(A) = n, the number of columns. The nullity is the dimension of the null space of A.

5. Question: How does the rank of a matrix relate to its inverse?

Sample Answer: A square matrix is invertible (nonsingular) if and only if it has full rank. A rank-deficient matrix is singular and does not have an inverse.

6. Question: How can you use the rank of a matrix to detect collinearity in data?

Sample Answer: If the rank of a matrix representing a dataset is less than the number of its columns (features), it indicates that the features are not linearly independent, suggesting collinearity.

7. Question: Discuss the importance of rank in solving linear systems and in machine learning applications.

Sample Answer: The rank of a matrix plays a critical role in determining whether a system of linear equations has a unique solution. In machine learning, a low (numerical) rank indicates redundant features and motivates dimensionality reduction.

8. Question: How can you find a basis for the null space (kernel) of a matrix?

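Sample Answer: Solve the homogeneous system Ax = 0 by reducing A to reduced row-echelon form, then express each pivot variable in terms of the free variables. Setting one free variable to 1 and the others to 0, in turn, gives one basis vector per free variable. Numerically, the right singular vectors corresponding to zero singular values form an orthonormal basis for the null space.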
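Here is an SVD-based sketch, assuming NumPy. `null_space_basis` is a hypothetical helper, and its tolerance rule is one common convention. SciPy offers a ready-made `scipy.linalg.null_space`. The example also illustrates rank-nullity: a rank-1 matrix with 3 columns has a 2-dimensional null space.

```python
import numpy as np

def null_space_basis(A, tol=None):
    """Orthonormal basis for the null space of A, computed from its SVD.

    Right singular vectors whose singular values are (numerically) zero span null(A).
    """
    A = np.asarray(A, dtype=float)
    U, s, Vt = np.linalg.svd(A)  # full SVD: Vt is n x n
    if tol is None:
        tol = max(A.shape) * np.finfo(float).eps * s.max()
    rank = int(np.sum(s > tol))
    return Vt[rank:].T           # columns are basis vectors of null(A)

A = np.array([[1.0, 2.0, 3.0],
              [2.0, 4.0, 6.0]])  # rank 1, so nullity = 3 - 1 = 2
N = null_space_basis(A)
print(N.shape)                # (3, 2)
print(np.allclose(A @ N, 0))  # True
```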

9. Question: What does it mean for a matrix to have full column rank and full row rank?

Sample Answer: A matrix with full column rank means that all its columns are linearly independent, and a matrix with full row rank means that all its rows are linearly independent.

10. Question: How can the rank of a matrix be used to determine the dimensionality of a subspace?

Sample Answer: The rank of a matrix represents the dimensionality of the subspace spanned by its column or row vectors, which can be useful in various machine learning tasks, such as feature selection and data compression.

Positive Definite Matrices:

1. Question: What is a positive definite matrix?

Sample Answer: A positive definite matrix is a symmetric matrix A such that x^T A x > 0 for every nonzero vector x. Equivalently, all of its eigenvalues are positive.

2. Question: How do you check if a matrix is positive definite?

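Sample Answer: First confirm that the matrix is symmetric, then compute its eigenvalues and verify that they are all positive. A cheaper test is to attempt a Cholesky decomposition, which succeeds exactly when a symmetric matrix is positive definite.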
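A cheaper alternative to computing eigenvalues is to attempt a Cholesky factorization, shown in this NumPy sketch. `is_positive_definite` is an illustrative helper. `np.linalg.cholesky` raises `LinAlgError` when the factorization fails, but it does not verify symmetry, so that check comes first.

```python
import numpy as np

def is_positive_definite(A):
    """Symmetric + successful Cholesky factorization  <=>  positive definite."""
    A = np.asarray(A, dtype=float)
    if not np.allclose(A, A.T):
        return False
    try:
        np.linalg.cholesky(A)
        return True
    except np.linalg.LinAlgError:
        return False

print(is_positive_definite([[2.0, -1.0], [-1.0, 2.0]]))  # True  (eigenvalues 1 and 3)
print(is_positive_definite([[1.0, 2.0], [2.0, 1.0]]))    # False (eigenvalues -1 and 3)
```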

3. Question: Explain the significance of positive definite matrices in optimization problems.

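Sample Answer: In optimization, if the Hessian is positive definite at a point where the gradient is zero, that point is a strict local minimum. If the Hessian is positive definite everywhere, the objective is strictly convex, so any minimum is the unique global minimum. Methods such as Newton's method also rely on a positive definite Hessian to produce descent directions.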

4. Question: How are positive definite matrices used in machine learning algorithms like Support Vector Machines (SVMs)?

Sample Answer: In SVMs, a valid kernel must produce a positive semi-definite kernel (Gram) matrix for any set of inputs (Mercer's condition). This makes the dual optimization problem a convex quadratic program, so any local optimum is a global one.

5. Question: What is the Cholesky decomposition of a positive definite matrix, and how is it computed?

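Sample Answer: The Cholesky decomposition represents a symmetric positive definite matrix A as the product of a lower triangular matrix L and its transpose: A = LL^T. It is computed entry by entry using two formulas:
- Diagonal entries: L_jj = √(A_jj - Σ_{k<j} L_jk^2).
- Below-diagonal entries (i > j): L_ij = (A_ij - Σ_{k<j} L_ik L_jk) / L_jj.

It costs about half as much as LU decomposition.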
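The entry-by-entry formulas translate directly into the row-by-row (Cholesky-Banachiewicz) loop sketched below, assuming NumPy. `cholesky` here is a hypothetical teaching helper, compared against `np.linalg.cholesky`, which also returns the lower-triangular factor.

```python
import numpy as np

def cholesky(A):
    """Return lower-triangular L with A = L @ L.T (row-by-row Cholesky)."""
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    L = np.zeros_like(A)
    for i in range(n):
        for j in range(i + 1):
            s = A[i, j] - L[i, :j] @ L[j, :j]  # subtract the already-known terms
            if i == j:
                if s <= 0:
                    raise ValueError("matrix is not positive definite")
                L[i, i] = np.sqrt(s)
            else:
                L[i, j] = s / L[j, j]
    return L

A = np.array([[4.0, 2.0], [2.0, 3.0]])
L = cholesky(A)
print(L)                                      # rows [2, 0] and [1, 1.414...]
print(np.allclose(L, np.linalg.cholesky(A)))  # True
```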

6. Question: How can positive definite matrices be used for multivariate normal distribution and generating random samples?

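Sample Answer: The covariance matrix Σ of a non-degenerate multivariate normal distribution is positive definite, so it has a Cholesky factor L with Σ = LL^T. To sample, draw a vector z of independent standard normal values and compute x = μ + Lz. The result x has mean μ and covariance Σ.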
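Here is a sampling sketch, assuming NumPy; the mean and covariance are illustrative values. Each row of `Z @ L.T` equals `L @ z` for the corresponding draw. NumPy's `Generator.multivariate_normal` performs a similar factorization internally (SVD by default, with a `method="cholesky"` option).

```python
import numpy as np

rng = np.random.default_rng(0)
mu = np.array([1.0, -2.0])
Sigma = np.array([[2.0, 0.8],
                  [0.8, 1.0]])  # illustrative symmetric positive definite covariance

L = np.linalg.cholesky(Sigma)              # Sigma = L @ L.T
Z = rng.standard_normal(size=(100_000, 2)) # rows: independent N(0, I) draws
X = mu + Z @ L.T                           # each row x = mu + L z ~ N(mu, Sigma)

print(X.mean(axis=0))           # close to mu
print(np.cov(X, rowvar=False))  # close to Sigma
```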

7. Question: Discuss the relationship between positive definite matrices and convexity in optimization problems.

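Sample Answer: For a twice-differentiable function, a Hessian that is positive semi-definite everywhere means the function is convex, and one that is positive definite everywhere means it is strictly convex. Convexity ensures that any local minimum is a global minimum. Strict convexity additionally makes the minimizer, if one exists, unique.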

8. Question: Can a matrix be positive definite and singular (non-invertible) at the same time?

Sample Answer: No. A positive definite matrix is always nonsingular (invertible) because all its eigenvalues are positive, whereas a singular matrix has at least one zero eigenvalue.

9. Question: How do you use the positive definiteness of matrices in solving linear systems and optimization problems?

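Sample Answer: In linear systems, a positive definite coefficient matrix is guaranteed to be nonsingular, so the system has a unique solution. It can be solved efficiently with Cholesky decomposition or the conjugate gradient method. Positive definiteness does not guarantee good conditioning, however: the condition number (the ratio of the largest to the smallest eigenvalue) can still be very large. In optimization, a positive definite Hessian gives a quadratic objective a unique minimizer.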

10. Question: What are the alternatives for positive definite matrices in cases where positive definiteness cannot be guaranteed?

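Sample Answer: When positive definiteness cannot be guaranteed, positive semi-definite matrices can serve as an alternative. They have all non-negative eigenvalues, which still guarantees some desirable properties in optimization and other applications. A common remedy is to add a small multiple of the identity, A + λI with λ > 0, which turns a positive semi-definite matrix into a positive definite one (as in ridge regression).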

Remember, these questions and sample answers are a reference, not a script. The key to performing well in a data scientist job interview is understanding the principles and concepts behind the questions rather than memorizing answers. Good luck with your interview!


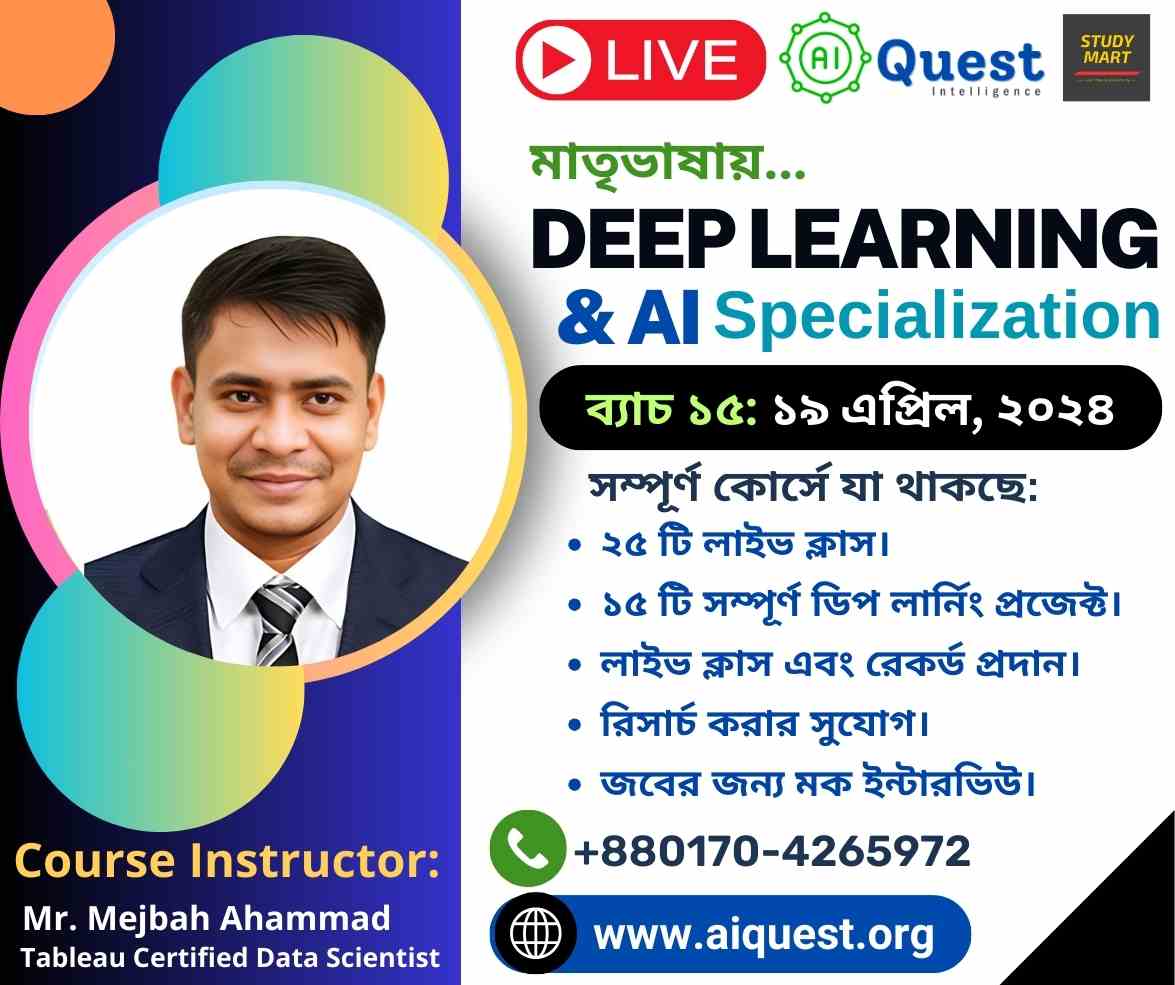


Kind regards,

Founder of aiQuest Intelligence & Study Mart
